Spring Initializr and Continuous Generation

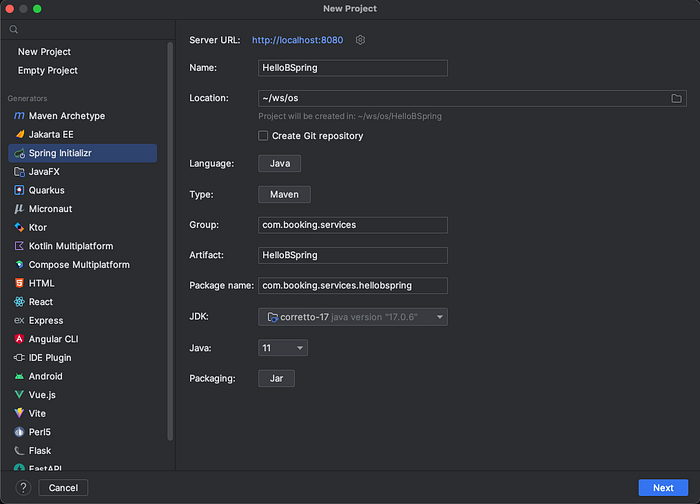

You think you know the Spring Framework pretty well, and suddenly you find yourself in the ocean of this other Spring project, which is just awesome! I’m talking about the Spring Initializr project, the black magic behind start.spring.io and the service IntelliJ uses (see the screenshot 👆) to create new Spring projects. Like many other Spring projects, it is open source of course, and you can (and actually should) customise it to fit your organisation’s requirements.

TL;DR

Do you love using start.spring.io? Did you know you can run your own version of this service, tailored to your organisation’s needs? Moreover, with a simple “trick” you can make sure everyone keeps benefiting from your generator!

But why?!

A project in an organisation can only live for so long. As your organisation grows, product maturity grows with it, and the technology grows at the same time, probably N times faster. This means your organisation’s “Vanilla Project” is evolving very fast; but how are you reflecting this change? Is it just a git project sitting in a repository that no one even looks at? Is it a Maven archetype? Is it a yo generator? Or [drum roll] is it a Spring Initializr service? And more importantly, how do you keep the teams informed about what’s changing in the Vanilla Project?

How does Spring Initializr work?

Spring Initializr essentially provides an endpoint (/starter.zip) that takes a bunch of parameters and generates a project based on them. These parameters include information about the project (such as group, artifact, base package, etc.) and the project’s dependencies (such as JPA, Kafka, Testcontainers, etc.).
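
To make the endpoint concrete, here is a minimal sketch of such a request. It assumes the public start.spring.io instance and its usual query parameter names (type, groupId, artifactId, packageName, dependencies); the project values and dependency ids are only examples, and your own Initializr may expose different ids.

```shell
#!/bin/sh
# Build a /starter.zip request URL from project metadata and dependency ids.
# The base URL and all values below are illustrative assumptions.
INITIALIZR_URL="${INITIALIZR_URL:-https://start.spring.io}"

starter_url() {
  # $1 = groupId, $2 = artifactId, $3 = comma-separated dependency ids
  printf '%s/starter.zip?type=maven-project&groupId=%s&artifactId=%s&packageName=%s.%s&dependencies=%s\n' \
    "$INITIALIZR_URL" "$1" "$2" "$1" "$2" "$3"
}

url=$(starter_url com.example demo data-jpa,kafka,testcontainers)
echo "$url"

# To actually download and unpack the project (requires network access):
#   curl -fsSL "$url" -o demo.zip && unzip -q demo.zip -d demo
```

The download itself is left as a comment so the snippet can be tried without touching the network; everything the service needs is already encoded in the URL.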

Customisable Initializr

Initializr on its own is very customisable; just take a look at start-site and see for yourself. This is the actual service that the start.spring.io UI consumes to generate the final project. For example, if you look into the configuration file of this service, you will see where the dependencies and their information (like description, version, BOM, etc.) come from.

Generation logic

Spoiler alert! I don’t want to ruin the fun of figuring this out from the code, so I encourage you to look for yourself first and see how different generation logic is applied when a certain dependency is requested.

The answer is that they use a new application context (ProjectGenerationContext) for each generation request. This allows you to define beans that are only loaded if the request meets a certain condition. For example, a configuration can be loaded only if the project was requested with a Gradle build.

Continuous Generation

Of course, it’s fun to generate a project using this tool, but as we said before, generating it once is not enough. Some of the changes you will want to roll out later are:

- Change of dependencies: you want to publish some events on Kafka from now on, so you now depend on Kafka.

- Breaking changes in libraries: How do you introduce a breaking change in a library? Following best practices, you’d probably deprecate the old way, add the new way, and remove the “old way” in the next major release. But how do people know when and how they should make the switch? Continuous generation can help you reflect these changes in your organisation’s projects. Our generated project comes with a sample that depends on the selected dependencies. For example, if you have selected JPA, a “bike booking shop” is generated for you, which takes advantage of the libraries we have. Now, if we want to make a breaking change in our JPA libraries, the sample code of the bike booking shop shows you how to migrate.

- Introduce new features: Very similar to the previous point, you can also announce new features in your libraries this way. For example, if there’s a new way of creating tests, that can also be shown in the generated sample project.

In order to get these benefits, you need to re-generate your project periodically and check “what’s new”. And how do we do that, you ask? I recently implemented this for a community Initializr service in our company. I used git and GitLab pipelines to create a “service” that works like Dependabot. The main idea is to keep a separate protected branch just for the generator, with no history of what happened after the project was generated. This branch only gets updated when there’s a change in the “generated project”. If such a change is detected, a new MR is also created.

Be aware that you most probably can’t merge the generator branch into main directly, because there will always be conflicts! So the MR is mainly there to notify you about a change in the generated project. At this point, you have to branch off from the generator branch (remember, we should never merge anything into the generator branch!), update that new branch with main, resolve the conflicts (the main goal) and then merge it back into main (so you know which version of the generated project you are “using” on main). While going through the conflicts, pay attention to what has changed, and remember: no matter how tedious this job is, it should be better than going through docs to read what has changed and what you need to do!
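
The branching dance described above can be sketched as a small shell function. The branch names (generator, main) and the name of the temporary update branch are assumptions, and in practice you would normally do the final step through a merge request rather than a local merge.

```shell
#!/bin/sh
# Sketch: adopt a regenerated project without ever committing to the generator branch.
# Branch names and the update branch prefix are assumptions for illustration.
adopt_generator_update() {
  generator_branch="${1:-generator}"
  main_branch="${2:-main}"
  update_branch="adopt-$generator_branch-$(date +%Y%m%d)"

  # Start from the generator branch so its tip becomes an ancestor of main.
  git checkout -b "$update_branch" "$generator_branch" || return 1

  # Bring in main; conflicts are expected and are the actual review work.
  if ! git merge --no-edit "$main_branch"; then
    echo "Resolve the conflicts, commit, then merge $update_branch into $main_branch." >&2
    return 2
  fi

  # No conflicts: main can simply fast-forward to the update branch.
  git checkout "$main_branch" && git merge --ff-only "$update_branch"
}
```

Calling `adopt_generator_update generator main` inside a clone either finishes the whole round trip or stops at the conflicts. Because the update branch starts at the generator branch, main ends up containing the generator’s latest commit, which is how you can tell which generated version main is based on.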

But like how?

You know the idea, but how can you make this happen? Well, you need a pipeline that generates your project periodically. This means our Initializr should generate a “regeneration script”, a pipeline job to run this script, and a configuration to run this pipeline periodically. The regeneration script calls our Initializr API with the “expected parameters”, which are exactly the parameters that were used to generate the current project.

Now we want to call this script from our pipeline to re-generate the project. I’m using GitLab pipelines for this, but any pipeline should work similarly. One “workaround” I used here: I didn’t want to spend time on running Initializr as a service in our company, so I’m running its Docker image as a GitLab service, and the regeneration script sends its request to this Docker container.
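
A regeneration script along these lines could look like the sketch below. It is not the script our Initializr generates; the host name initializr matches the service alias used in the pipeline, while the port 8080, the parameter values and the use of curl and unzip are assumptions.

```shell
#!/bin/sh
# regenerate.sh (sketch): re-request the project with the parameters it was created with.
# Host "initializr" is the service alias from the pipeline; port 8080 and every
# parameter value below are assumptions for illustration.
set -eu

INITIALIZR_URL="${INITIALIZR_URL:-http://initializr:8080}"

# Frozen at generation time by the Initializr itself.
GROUP_ID="com.example"
ARTIFACT_ID="bike-booking"
DEPENDENCIES="data-jpa,kafka"

request_url() {
  printf '%s/starter.zip?type=maven-project&groupId=%s&artifactId=%s&dependencies=%s\n' \
    "$INITIALIZR_URL" "$GROUP_ID" "$ARTIFACT_ID" "$DEPENDENCIES"
}

regenerate() {
  tmp_zip="$(mktemp)"
  curl -fsSL "$(request_url)" -o "$tmp_zip"
  # Unpack over the working tree; git then shows exactly what the generator changed.
  unzip -oq "$tmp_zip" -d .
  rm -f "$tmp_zip"
}

# Only download inside a GitLab job (GITLAB_CI is set there); elsewhere just
# print the request so the script can be checked without a running Initializr.
if [ -n "${GITLAB_CI:-}" ]; then
  regenerate
else
  request_url
fi
```

Note that unpacking on top of the working tree does not delete files that the newer generator no longer produces; if that matters to you, clear the tracked files before unpacking.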

As we mentioned before, this pipeline is supposed to regenerate the project and then if there are any changes, commit & push those changes and create a new merge request. So it will need write permissions to our repository.

    re-generate-job:
      image: ... # an image which can run the regenerate script (it needs curl, git and an SSH client), for example one based on curlimages/curl
      services:
        - name: test-amir-initializr:release
          alias: initializr # regenerate script will call this as a host
      tags:
        - docker
      before_script: # (1) add deploy token's private key to ssh
        - eval $(ssh-agent -s)
        - echo "$SSH_PRIVATE_KEY_TOOLKIT" | tr -d '\r' | ssh-add - > /dev/null
        - mkdir -p ~/.ssh
        - chmod 700 ~/.ssh
        - ssh-keyscan $CI_SERVER_HOST >> ~/.ssh/known_hosts
        - chmod 644 ~/.ssh/known_hosts
      script:
        # (2) pull repo
        - echo "Pulling repo into build"
        - ssh git@$CI_SERVER_HOST
        - git clone git@$CI_SERVER_HOST:$CI_PROJECT_PATH.git workspace
        - cd workspace
        - git checkout $CI_COMMIT_REF_NAME
        # (3) regenerate the project
        - chmod +x ./regenerate.sh
        - ./regenerate.sh
        # Push repo changes into current repo
        - git add .
        - git config user.email "initializr-bot@example.com"
        - git config user.name "Initializr Bot"
        # (4) commit, push and create a MR if there are any changes
        - if [[ `git status --porcelain` ]]; then git commit -m "project regenerated"; git push origin HEAD:$CI_COMMIT_REF_NAME -o merge_request.create; fi;
      rules:
        # (5) this job is only executed on scheduled pipelines
        - if: $CI_PIPELINE_SOURCE == "schedule"

On the pipeline above, you see:

- (1) I used this post to add our generator’s service account’s SSH private key.

- (2) The project is cloned.

- (3) The regenerate script is called.

- (4) If there are any changes, they are committed and pushed, and a new merge request is created from this branch to the default branch.

- (5) This job is only executed on scheduled pipelines.

As simple as that! Let’s say this job runs weekly on all the generated projects in your organisation. If you make a change to the Initializr and publish the new Docker image, everyone who is affected by your change should get a new merge request reflecting it by next week.
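
The weekly run itself is a pipeline schedule, which you can click together in the GitLab UI or register through the GitLab REST API. Here is a hedged sketch of the API variant; GITLAB_API_URL, GITLAB_TOKEN, the branch name generator and the cron expression (Mondays at 06:00) are all assumptions.

```shell
#!/bin/sh
# Sketch: register a weekly pipeline schedule on the generator branch through
# the GitLab REST API. GITLAB_API_URL, GITLAB_TOKEN, the branch name and the
# cron expression are assumptions.
create_weekly_schedule() {
  project_id="$1"                 # numeric project id or URL-encoded path
  ref="${2:-generator}"           # branch the scheduled pipeline runs on

  curl -fsS --request POST \
    --header "PRIVATE-TOKEN: ${GITLAB_TOKEN:?set GITLAB_TOKEN}" \
    --data-urlencode "description=Weekly project regeneration" \
    --data-urlencode "ref=$ref" \
    --data-urlencode "cron=0 6 * * 1" \
    "${GITLAB_API_URL:?set GITLAB_API_URL}/projects/$project_id/pipeline_schedules"
}
```

With GITLAB_API_URL pointing at something like https://gitlab.example.com/api/v4 and a token that is allowed to manage schedules, `create_weekly_schedule 1234 generator` would create the schedule for project 1234. The scheduled pipeline then matches the rule on the job, because its pipeline source is "schedule".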

Conclusion

Define a custom Spring Initializr based on your organisation’s needs; it’s very easy! Next, and more importantly, try to make a habit of “Continuous Generation” for the teams that use your libraries. This gives you a powerful tool for keeping some control over consumer repositories, and it also makes it easier to add value in the future, because a project generated in the past benefits from your changes too.